Discover the hidden “Mental Model” gap that separates elite AI operators from frustrated beginners. Learn why better prompts aren’t the answer, how experts use AI to externalize rather than outsource their thinking, and the specific “Level 1” framework you need to turn generative AI from a generic toy into a high-precision cognitive multiplier.

Stop asking AI to think for you. It wasn’t built for that.

There is a strange paradox in the AI world right now.

Navigate to LinkedIn and you’ll see “prompt engineers” selling magic spells that promise to double your business. But talk to actual industry veterans (senior architects, lead strategists, specialized researchers) and you’ll hear a different story. To them, AI isn’t magical; it’s surgical.

Why does the same tool feel like a toy to one person and a force multiplier to another?

I’ve spent the last year dissecting this divide, analyzing workflows from Reddit’s most advanced AI communities to enterprise boardrooms. The answer isn’t better prompts or smarter models.

It’s about Mental Models.

If you feel like AI is useless in your workflow, it’s not because the AI is dumb. It’s because you’re using it to think for you instead of using it to externalize your thinking.

Here is the difference that changes everything.

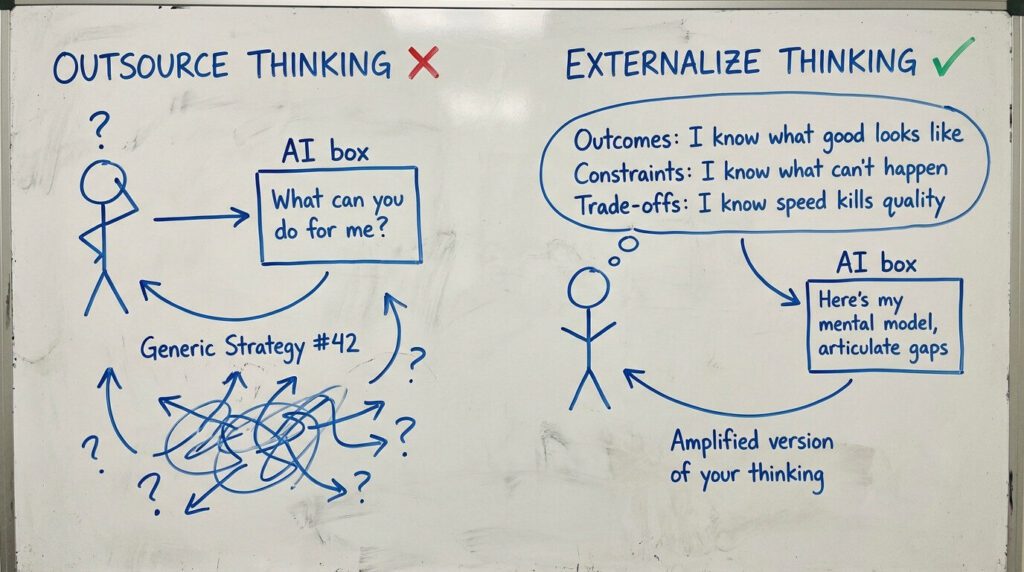

The Trap: Outsourcing vs. Externalizing

Most beginners approach AI with a “Level 0” mindset. They stare at the blinking cursor and ask:

“What can you do for me?”

They treat AI like an oracle. They want it to generate a strategy, write a novel, or code a platform from scratch. And the AI obliges, with generic, hallucinated, mediocre filler.

Experts do the opposite.

Experts don’t arrive at the prompt box empty-handed. They arrive with a packed mental suitcase:

Outcomes: They know exactly what “good” looks like.

Constraints: They know what can’t happen.

Trade-offs: They know what each gain costs; optimizing for speed, for example, usually costs quality.

The expert says: “I have a theory about market X. Here are my three constraints. Articulate the friction points I’m missing.”

They aren’t outsourcing their thinking. They are externalizing it. They are using AI to get the thoughts out of their head so they can view them objectively, critique them, and iterate.

My “Aha!” Moment

I vividly remember hitting a wall with a complex data pipeline design. I spent hours trying to prompt ChatGPT to “solve the architecture.” It kept giving me generic AWS boilerplate.

I stopped. I realized I was asking it to be the architect. I switched gears. I wrote down my mental model: “I need high throughput, but latency is irrelevant. Cost is a hard constraint.”

I fed that mental model into the AI. The result? It didn’t give me a generic solution. It gave me a specific critique of my bottleneck. It didn’t do the thinking for me; it accelerated the articulation of the thinking I had already done.
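To make the “mental suitcase” concrete, here is a minimal Python sketch, assuming you assemble the prompt yourself before pasting it into whatever chat tool you use. The `MentalModel` class, its fields, and the closing instruction are hypothetical names and wording chosen for illustration; they are not part of any AI library, and nothing here calls a model API. The usage at the bottom encodes the pipeline example above (high throughput, cost as a hard constraint, latency sacrificed) and ends with a request for critique rather than a solution.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class MentalModel:
    """Hypothetical container for the 'mental suitcase' you bring to a session."""
    goal: str
    outcomes: list[str] = field(default_factory=list)     # what "good" looks like
    constraints: list[str] = field(default_factory=list)  # what must not happen
    trade_offs: list[str] = field(default_factory=list)   # what you are willing to sacrifice

    def to_prompt(self) -> str:
        """Render the model as a request for critique, not a 'solve it for me' request."""
        sections = [f"Goal: {self.goal}"]
        for title, items in (
            ("Outcomes", self.outcomes),
            ("Constraints", self.constraints),
            ("Trade-offs", self.trade_offs),
        ):
            if items:  # skip empty sections so the prompt stays tight
                bullets = "\n".join(f"- {item}" for item in items)
                sections.append(f"{title}:\n{bullets}")
        # Hypothetical closing instruction: ask for critique of *your* thinking.
        sections.append(
            "Do not propose a generic design. Critique my model: "
            "identify the bottleneck or friction point I am most likely missing."
        )
        return "\n\n".join(sections)


# Usage: the data pipeline mental model from the story above.
pipeline = MentalModel(
    goal="Design a data pipeline",
    outcomes=["High throughput"],
    constraints=["Cost is a hard constraint"],
    trade_offs=["Latency is irrelevant; sacrifice it for throughput and cost"],
)
print(pipeline.to_prompt())
```

The design choice is deliberate: you fill in the outcomes, constraints, and trade-offs before the model sees anything, and the closing line asks it to critique what you brought. That is the externalizing move, as opposed to asking it to invent a design from nothing.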

The Two Levels of AI Mastery

In the deep trenches of AI discussions (specifically the brilliant minds at r/AIMakeLab), a framework has emerged that explains this perfectly.

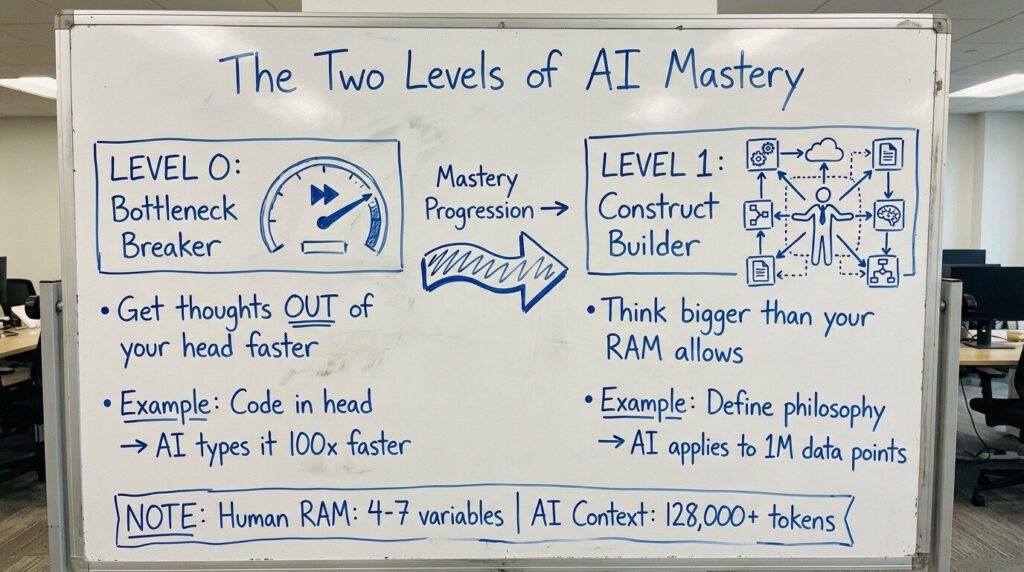

Level 0: The “Bottleneck Breaker”

This is the baseline. You have a thought, but writing it down is slow. Coding it is slow.

The Amateur: Asks AI to write the code from scratch.

The Expert: Has the logic mapped out in their head and uses AI to type it out many times faster.

Level 0 is about speed. It’s about getting what is already in your head out into the world so it stops clogging your cognitive bandwidth.

Level 1: The “Construct Builder”

This is where the magic happens. This is “God Mode.” Level 1 is asking: “How do I think in constructs so big I can’t possibly hold them in my RAM?”

Human brains are limited. Working memory holds only a handful of items at once; common estimates range from about 4 to 7. Many current models, by contrast, accept context windows of 128,000 tokens or more.

When you master Level 1, you stop doing the work. You become the orchestrator of reasoning chains.

You don’t write the character; you define the semantic soul of the character with heavyweight references like “Joan of Arc” instead of “brave rebel,” and let the AI extrapolate the behavior.

You don’t analyze the data; you define the philosophy of risk you subscribe to, and let the AI apply that philosophy to a million data points.

Why “Semantic Power” Is Your Secret Weapon

One of the most profound takeaways from expert discussions is the concept of Semantic Power.

Beginners use weak tokens. They ask for a “friendly tone” or a “smart analysis.” Experts use heavy tokens: words and concepts that carry massive cultural and historical weight.

Weak: “Make this character persistent.” (The AI guesses generic persistence).

Strong: “This character has the endurance of Lou Gehrig.” (The AI instantly understands: showing up every day, quiet dignity, reliability despite pain).

Experts leverage their education, their culture, and their reading to feed the AI “compressed zip files” of meaning. The AI unpacks them. If you don’t have the mental model (the reference), you can’t use the tool effectively.

This is why AI leverage scales with your knowledge, not the model’s.

The Verdict: Don’t Be a Prompter, Be an Architect

If you want to move from “AI is a toy” to “AI is a weapon,” change your question.

Stop asking: “What can AI do?” Start asking: “What thinking should never stay in my head?”

The Checklist for Your Next Session:

Bring the Framework: Never open a chat until you know your constraints and outcomes.

Externalize, Don’t Outsource: Use AI to clarify your messy thoughts, not to generate thoughts for you.

Use Heavy Tokens: Don’t describe; reference. Use specific mental models (e.g., “Apply the Pareto Principle to this list”).

AI is a mirror. If you stare into it with a blank mind, you get a blank stare back. But if you stand before it as an expert, with a clear mental model and rigorous constraints, it will reflect your genius back to you, amplified a thousand times over.

That is the multiplier.